On February 17, 2026, Judge Jed S. Rakoff of the Southern District of New York ruled in United States v. Heppner that a criminal defendant's written exchanges with Claude carried neither attorney-client privilege nor work product protection. The decision is a first-impression ruling on Claude legal AI use.

The holding is narrower than the headlines suggest, and more actionable than most coverage acknowledged. Heppner is the first privilege ruling in the country against generative AI use by a represented party, and Claude is the platform at its center.

GC AI is the legal AI platform Cecilia Ziniti built for the kind of in-house counsel she used to be at Anki, BloomTech, and Replit. We use Anthropic's models among others, which means we know where Claude shines for in-house legal work and where the gaps still need closing.

Can In-House Counsel Use Claude AI for Legal Work?

Yes, with the same caveats every generative AI platform now carries. ABA Formal Opinion 512, issued July 2024, confirmed that generative AI use by lawyers is permissible under existing ethics rules, so long as counsel maintains three duties: competence, supervision, and confidentiality.

State bars have built on the same framework. California, New York, Florida, and the D.C. Bar all anchor their guidance on those three pillars.

The hard part is the duties themselves. Competence means understanding what Claude can and cannot do, including hallucination patterns and jurisdictional blind spots. Supervision means treating Claude output like a sharp intern's draft, something to be checked rather than signed. Confidentiality means knowing what happens to the text you paste: where it lives, who can read it, whether the platform trains on it, and whether your subscription tier carries enforceable confidentiality terms.

Lawyers can use Claude well when the duties hold. The duties carry the work.

Four Rules for Using Claude Legal AI Safely

Four rules govern the safe use of Claude legal AI for in-house work. None of them are new. All of them carry more weight after Heppner and the August 2025 Anthropic policy shift.

1. Choose the right tier, and elect the right setting. For privileged, regulated, or client-identifying work, deploy on Claude for Work or a legal AI platform built on the Commercial Terms posture. For any consumer-tier use that touches sensitive content, confirm the user did not opt in to model training under the August 2025 update.

2. Document the deployment as counsel-directed. The privilege analysis after Heppner turns on whether the AI functions as an agent of counsel. Counsel-directed use on a platform with enforceable confidentiality is the workaround the court left open. A short policy memo from the GC office, kept on file and naming the platform and the workflow, is the artifact the court's counsel-direction prong asks for.

3. Verify every citation against a primary source. Verify against Westlaw, LexisNexis, Casetext, the official code, or the actual contract. Claude hallucinates. Counsel signs what counsel verifies, and the verification step is the workflow.

4. Match the scope to the model. Long-context document analysis plays to Claude's strength. Niche jurisdictional analysis sits outside that strength. Use Claude on the work it is built for, and pair it with a legal-specific platform on the work that requires playbook context, team templates, and verifiable citations.

The rest of this piece unpacks the why behind each rule.

What Claude Does Well for Legal Work

Three Claude strengths matter for in-house work: long context, writing quality, and reasoning across documents. Anthropic built Claude as a long-context model with a writing style that lawyers find unusually compatible with how legal documents read.

Long context. Per Anthropic's model documentation (May 2026), Claude Opus 4.7 and Sonnet 4.6 offer a one-million-token context window through the API and certain enterprise tiers, roughly 1,500 to 2,000 pages in a single session. Consumer-tier behavior on the Claude.ai interface varies by plan and underlying model.

For in-house teams, that ceiling covers a full discovery production, a stack of related contracts, or an entire deal binder. The long-context advantage is operational on first-pass document analysis.
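The page figure above can be sanity-checked with back-of-the-envelope arithmetic. The sketch below is illustrative only: the words-per-token and words-per-page ratios are assumptions chosen for English legal prose, not figures published by Anthropic, and the function name is hypothetical.

```python
from typing import Tuple

# Assumed ratios (not Anthropic figures): roughly 0.75 English words per
# token, and between 375 and 500 words per page depending on formatting.
WORDS_PER_TOKEN = 0.75
WORDS_PER_PAGE_DENSE = 500
WORDS_PER_PAGE_SPARSE = 375


def estimate_pages(tokens: int) -> Tuple[int, int]:
    """Return a (low, high) page estimate for a given token budget."""
    words = tokens * WORDS_PER_TOKEN
    low = round(words / WORDS_PER_PAGE_DENSE)    # dense pages -> fewer pages
    high = round(words / WORDS_PER_PAGE_SPARSE)  # sparse pages -> more pages
    return low, high


low, high = estimate_pages(1_000_000)
print(f"About {low:,} to {high:,} pages")  # prints "About 1,500 to 2,000 pages"
```

Actual capacity depends on how a given document tokenizes, so treat the range as an order-of-magnitude guide when deciding whether a binder fits in one session.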

Writing quality. Claude produces prose that reads as drafted by a senior associate. For client memos, executive summaries, and translating dense regulatory text into business-readable English, the first draft typically requires light editing rather than rewriting.

Reasoning across documents. Long-context reasoning means Claude can hold a contract and a playbook in the same window and identify deviations clause by clause. Used carefully and reviewed properly, that workflow saves hours on first-pass NDA review.

In-house teams get real value from Claude on the following work, used safely:

Summarizing a 60-page diligence memo into a three-bullet executive version

Drafting a first pass at a regulatory update for engineering or product leads

Issue-spotting in a contract as a second set of eyes on a clause counsel has already reviewed

Outlining a brief or a board memo before counsel fills in the citations

Translating dense statutory text such as GDPR Article 28, DGCL 203, and the EU AI Act into plain English

Generating training outlines, interview questions, or framework sketches for internal legal education

What unites these use cases is that a competent in-house lawyer reviews the output before anything moves. That review is the entire game.

What United States v. Heppner Changed for Claude Users

For any lawyer using Claude, Heppner is the 2026 update that changes the playbook.

The fact pattern was specific. Bradley Heppner was indicted in October 2025 on securities and wire fraud, conspiracy, false statements to auditors, and falsifying corporate records. After indictment, and after retaining counsel, Heppner used Claude to prepare reports outlining his defense strategy and potential legal arguments. The government moved to compel production of those Claude exchanges. Judge Rakoff held oral argument on February 10, 2026, and ruled on February 17, 2026, that the exchanges were neither privileged nor protected work product.

Rakoff's reasoning ran on three tracks. First, the AI is not an attorney, so communications with it cannot be attorney-client privileged on their face. Second, the confidentiality prong failed on the terms of service in effect at the time. Third, Heppner's counsel had not directed him to use Claude, and self-directed client use is not a counsel-directed channel.

The court left one door open. Under the Kovel doctrine, which extends privilege to accountants, translators, and other agents retained by counsel to help render legal advice, Rakoff acknowledged that counsel-directed use of AI on a platform with contractual confidentiality might preserve privilege. That is the operational workaround, and it is the posture purpose-built legal AI platforms are designed to meet.

For the full breakdown of the ruling and what it means day-to-day, see our deep-dive on the Heppner ruling. The practical takeaway is narrow but actionable: if your Claude use does not satisfy all three Rakoff prongs, assume the chat is discoverable.
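That rule of thumb is a simple conjunction, and a team could encode it in an intake checklist. The sketch below is one reading of the three prongs as described in this piece, not a statement of the court's test; the class, field, and function names are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AiUse:
    """Facts about one AI workflow. All field names are hypothetical."""
    agent_of_counsel: bool             # the AI serves counsel in rendering legal advice
    contractual_confidentiality: bool  # platform terms carry enforceable confidentiality
    counsel_directed: bool             # counsel, not the client alone, directed the use


def failed_prongs(use: AiUse) -> List[str]:
    """List the prongs this workflow does not satisfy."""
    checks = {
        "agent of counsel": use.agent_of_counsel,
        "contractual confidentiality": use.contractual_confidentiality,
        "counsel direction": use.counsel_directed,
    }
    return [name for name, satisfied in checks.items() if not satisfied]


def assume_discoverable(use: AiUse) -> bool:
    """Any failed prong means the chat should be treated as discoverable."""
    return bool(failed_prongs(use))


# A self-directed consumer-tier chat, loosely modeled on the Heppner facts.
self_directed = AiUse(agent_of_counsel=False,
                      contractual_confidentiality=False,
                      counsel_directed=False)
print(assume_discoverable(self_directed), failed_prongs(self_directed))
```

Passing all three checks does not establish privilege; on this piece's reading of the ruling, it only keeps the argument available.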

Anthropic's August 2025 Policy Shift, and Why It Matters Post-Heppner

Anthropic's August 2025 consumer Claude policy shift changed how Claude legal AI use intersects with Heppner prong two. Coverage of Heppner tends to skip this part of the analysis.

On August 28, 2025, Anthropic updated its consumer Claude terms and introduced a user choice to share chat and coding-session data for model training. Users who opt in get five-year retention on the data they share. Existing users had until October 8, 2025 to make their selection to continue using Claude. Anthropic's privacy center confirms the posture.

The Commercial Terms tier was unchanged. Claude for Work, Claude for Government, Claude for Education, and API use remain no-train, with separate enterprise data handling. Anthropic was explicit on this distinction.

Here is what this means for Heppner prong two, in plain English:

Free or Pro Claude with opt-in to model training. Chats train the model and live in Anthropic's systems for five years. Heppner prong two fails on the face of the terms.

Free or Pro Claude with opt-in declined. Standard retention, no training. Better, and still subject to consumer-tier indemnities and terms.

Claude for Work or API. No training, enterprise data handling, contractual terms negotiable. This is the closest a raw Claude deployment gets to satisfying Heppner prong two, and the configuration is the customer's responsibility.
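The three configurations above reduce to a small lookup table that a legal ops team could keep alongside its AI policy. This is a sketch under stated assumptions: the values restate this article's summary rather than Anthropic's terms, and the enum, dictionary, and function names are hypothetical.

```python
from enum import Enum


class Tier(Enum):
    CONSUMER_OPTED_IN = "Free or Pro, training opt-in accepted"
    CONSUMER_DECLINED = "Free or Pro, training opt-in declined"
    COMMERCIAL = "Claude for Work or API"


# Data handling per tier as summarized in this article. Re-check the
# current Anthropic terms before relying on any of these values.
DATA_HANDLING = {
    Tier.CONSUMER_OPTED_IN: {"trains_on_chats": True, "retention": "five years"},
    Tier.CONSUMER_DECLINED: {"trains_on_chats": False, "retention": "standard"},
    Tier.COMMERCIAL: {"trains_on_chats": False, "retention": "enterprise data handling"},
}


def ok_for_privileged_work(tier: Tier) -> bool:
    """Rule 1 in this article: privileged work belongs on Commercial Terms."""
    return tier is Tier.COMMERCIAL


for tier in Tier:
    handling = DATA_HANDLING[tier]
    verdict = "yes" if ok_for_privileged_work(tier) else "no"
    print(f"{tier.value}: trains={handling['trains_on_chats']}, "
          f"retention={handling['retention']}, privileged work={verdict}")
```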

The consumer tier serves general consumer needs. Privileged legal work needs different scaffolding.

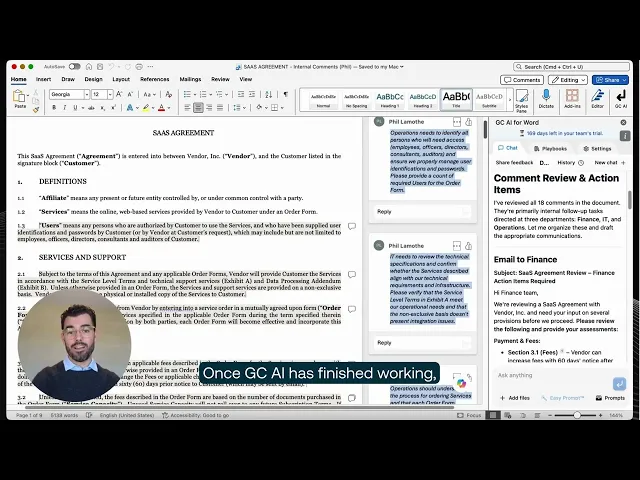

Claude for Word: What Anthropic Launched in April 2026, and Where the Gap Is

On April 11, 2026, Anthropic launched Claude for Word in public beta, with legal contract review listed as the first example use case. Lawyers can now invoke Claude inside Microsoft Word to summarize commercial terms, flag clauses that deviate from standard market positions, make indemnification mutual, and work through reviewer comments without leaving the document.

Claude for Word is real, and it is meaningful. It is also a beta from an AI lab. A workflow product from a legal team is a different thing.

Claude for Word ships today with a Word add-in, contract-review prompts, redlining via Word's tracked changes, and the Anthropic security posture that comes with each subscription tier.

Buyers evaluating Claude for Word should ask whether the team's contract templates and prior matters load by default, whether playbooks tuned to the company's negotiation positions ship out of the box, whether a custom company profile tells the model how the team writes, whether a prompt library calibrated to in-house workflows is included, and whether deployment terms address the Heppner three-part test. A legal-team product is built to close those questions.

Hayley McAllister, Senior Counsel and Head of Commercial Legal at Jasper, put it plainly:

"Once the Word plugin rolled out, I pretty much exclusively started using it for all of my redlining and contract review."

For an in-house lawyer who lives in Word, the live question is whether the AI shows up with the team's templates, playbooks, and prior context already loaded. For a side-by-side on the in-house deployment, see GC AI for Word.

How a Claude-Powered Legal AI Platform Closes the Gap

GC AI is the legal AI platform built for in-house counsel, founded by Cecilia Ziniti, a three-time General Counsel at Anki, BloomTech, and Replit, and used by 1,600+ in-house legal teams including 80+ public companies and 25 unicorns.

GC AI is SOC 2 Type II and SOC 3 certified, GDPR compliant, with zero data retention agreements with OpenAI and Anthropic, and AES-256 encryption.

The simplest way to think about GC AI is Claude with the in-house counsel layer Anthropic does not build, and a privilege posture designed around the Rakoff three-part test.

GC AI uses Anthropic's models as part of its underlying infrastructure, with zero data retention contractually committed for every customer, including non-enterprise tiers. On top of that infrastructure, the platform layer adds:

Files holds your team's contracts, templates, playbooks, and prior matters across sessions, so every chat starts with your team's context loaded.

Projects carries context across conversations within a specific matter, so the second lawyer on a deal keeps what the first lawyer established.

Custom Company Profile encodes your team's voice, templates, and standards, so outputs arrive calibrated to how your team writes.

Playbooks ships with pre-built workflows for NDAs, DPAs, MSAs for SaaS, and MSAs for commercial purchases, with agentic execution.

Exact Quote pulls character-level verbatim text from your uploaded documents, so every claim about what the contract says points to the line in the contract.

Easy Prompt turns plain-language requests into legally optimized prompts, so a non-prompt-engineer GC gets a usable answer on the first try.

GC AI for Word brings Chat2 web research, Skill Library workflows, Files, Projects, and Easy Prompt directly into Microsoft Word.

Danielle Sheer, Chief Trust Officer at Commvault, said it directly on the CZ and Friends podcast:

"What would be really helpful is if there was an entire universe that was like ChatGPT, but built for and made for the legal world and the compliance world: GC AI."

The wedge is the in-house legal universe built around the model.

Claude vs GC AI for In-House Legal Work: Side by Side

Five questions every in-house team eventually asks after the first quarter of using raw Claude:

Does our work train the model?

Can we verify what the AI says against the actual document?

Does the second lawyer on a deal start cold every time?

Do our team's templates and playbooks load by default?

Does the deployment satisfy Heppner's three-part test?

| Axis | Consumer Claude (Free / Pro / Max) | Claude for Work / API | GC AI |
|---|---|---|---|
| Training on data | Trains only when user opts in (August 2025 update); five-year retention on opted-in data | No training; enterprise data handling | Never trains; zero data retention contractually committed; data isolated per tenant |
| Citations | Plausible summaries; no character-level verbatim citations as of May 2026 | Same as consumer | Character-level verbatim citations from uploaded documents |
| Workflow context | Starts cold every session | Starts cold every session | Persistent context across sessions, matters, and team templates |
| Heppner posture | Fails all three Rakoff prongs | Satisfies prong two when correctly configured | Designed for all three prongs through counsel-directed deployment, contractual confidentiality, and agent-of-counsel posture |

In-house teams switch from raw Claude to a purpose-built legal AI platform when the team grows past three lawyers, when privileged work becomes a regular rather than occasional output, when client data falls under a regulated framework such as HIPAA, GDPR, CCPA, or GLBA, or when the team needs the AI to carry matter context across conversations rather than starting every session cold.

For readers weighing ChatGPT instead, see the sister analysis at ChatGPT for lawyers. For the comparison page, see GC AI vs Claude.

Try GC AI for In-House Legal Work

See how GC AI handles the same prompts you would put into Claude, with SOC 2 Type II and SOC 3 attestation, zero data retention, and the in-house workflow context built in.

Frequently Asked Questions

These questions came directly from in-house counsel in GC AI Classes chats.

Can You Confirm That Information Shared with Claude or GC AI Is Confidential and Not Used to Train the Model?

For Claude consumer tiers (Free, Pro, Max), training only happens when the user opts in under the August 28, 2025 consumer terms update; opting in extends retention to five years on the shared data. Claude for Work, Claude for Government, Claude for Education, and API use are no-train under Anthropic's Commercial Terms. GC AI never trains on customer data, with zero data retention contractually committed for every customer.

Most Vendor or Client Contracts Are Confidential. How Can I Be Sure That Uploading an Agreement to AI Does Not Break Confidentiality?

The answer turns on the platform's contractual posture and configuration, not the model. A consumer-tier deployment carries consumer-tier indemnities. A Commercial Terms deployment (Claude for Work, Claude for Government, API, or a legal AI platform built on those terms) provides enterprise data handling, no training, and contract terms a legal team can negotiate. For privileged work, the platform should be deployed counsel-directed with confidentiality terms reviewed by counsel, per Heppner's three-part test.

How Susceptible Is the AI to Hallucinating Answers, and What Should I Do About It?

Claude hallucinates. So does every general-purpose AI. The mitigation is the verification step: every citation goes against Westlaw, LexisNexis, Casetext, the official code, or the actual contract before counsel signs. A legal AI platform with character-level verbatim citation back to the source (Exact Quote on GC AI) reduces the verification burden by pointing to the line in the document rather than producing plausible-sounding paraphrase.
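The core of a verbatim check is easy to illustrate: a quoted passage either appears character for character in the source document or it does not. The sketch below shows that idea with plain string search. It is not GC AI's Exact Quote implementation, and the function name and sample contract text are invented for illustration.

```python
from typing import Optional, Tuple


def locate_quote(document: str, quote: str) -> Optional[Tuple[int, int]]:
    """Return (character offset, 1-based line number) of the first exact
    match of `quote` in `document`, or None when there is no exact match."""
    if not quote:
        return None
    offset = document.find(quote)
    if offset == -1:
        return None  # paraphrase or hallucination: nothing to point to
    line = document.count("\n", 0, offset) + 1
    return offset, line


# Hypothetical two-clause contract used only for this example.
contract = (
    "1. Term. This Agreement runs for two years.\n"
    "2. Indemnification. Each party shall indemnify the other.\n"
)

print(locate_quote(contract, "Each party shall indemnify the other"))
print(locate_quote(contract, "Both parties indemnify each other"))  # prints None
```

Exact matching is deliberately strict. A paraphrase returns no location, which is the signal for counsel to go back to the source before relying on the claim.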

Does the AI Have Access Across Chats, or Do You Have to Maintain a Single Thread? Is There a Way to Create Projects Like Claude?

Outside its own Projects feature, Claude's consumer interface starts each session cold. The lawyer who used Claude well yesterday cannot share that context with the lawyer who needs it tomorrow. GC AI's Projects feature carries context across conversations within a specific matter, and Files persists contracts, templates, and playbooks across sessions, so every chat starts with the team's context loaded.

Is GC AI Just an Additional Layer on Top of Claude to Make It a Legal Tool, or Is the Platform More Than That?

GC AI uses Anthropic's models as part of a multi-model backend, with zero data retention contractually committed for every customer and a 20,000-line legal system prompt that encodes in-house counsel context. The product layer (Files, Projects, Custom Company Profile, Playbooks, Exact Quote, Easy Prompt, Skill Library, and Chat2 in Word) is what turns a general-purpose model into a legal AI platform built for in-house teams.