We are excited to announce the In-House Legal Bench, a new comprehensive benchmark designed to evaluate AI performance on legal tasks that reflect the day-to-day work of in-house lawyers.

The benchmark tests whether the AI tools tested, such as GC AI, produce correct, well-structured legal analysis and work product by grading their responses against attorney-developed answer keys. We use the results to drive product improvements for GC AI and to show how it compares to general-purpose AI assistants.

For this benchmark, we evaluated GC AI against three leading AI assistants: ChatGPT (GPT-5.5), Claude (Opus 4.7), and Gemini (3.1 Pro). GC AI outperformed all three across every legal task category, leading the closest competitor, ChatGPT, by 7 percentage points overall.

Our Approach

Focusing on tasks reflecting real in-house legal work, designed by experienced practitioners, in end-user applications

The prompts we used in this benchmark represent the tasks and issues that in-house lawyers are expected to handle and oversee. In-house lawyers - counsel that work for companies as salaried employees, as opposed to lawyers employed by law firms and who typically bill by the hour - serve as primary advisors to the company and often juggle many tasks, including conducting legal research, drafting legal documents, advising on risks associated with new products or services, and managing regulatory compliance programs. They value advice that is direct, actionable, and business-focused because their goals are the company's business goals. That perspective - of the law and legal strategy as an input to company strategy - shapes the questions that in-house lawyers pose and the nature of their tasks and workflows.

Designing and reviewing evaluations that reflect these daily realities takes skill and experience. Our team of six R&D Attorneys with a combined 80+ years of professional experience at leading companies and law firms developed the materials used in this benchmark. Every task and answer key was created and vetted by this team, then implemented in close partnership with GC AI's Applied AI engineering team.

Tasks and Prompts

Designing tasks and prompts

We developed 100 unique in-house legal tasks for this benchmark, which fall into the following 10 categories:

Category | Example Task |

Drafting | Drafting a jurisdiction-compliant return-to-office policy for employees transitioning back from remote work |

Summarizing Documents | Producing an executive briefing of recent trial opinion, distilling key findings and implications from the ruling |

Contract Analysis | Explaining the scope and restrictions of an IP license agreement in plain English |

Legal Research | Describing SEC Schedule 13D beneficial ownership reporting requirements and recent amendments |

Legal Strategy | Assessing CPSC reporting obligations and recall options for a smart home device |

Risk Assessment | Identifying risks in an arbitration clause with no IP carve-outs in a supply agreement |

Comparison / Benchmarking | Comparing supplier codes of conduct across several direct competitors |

Extracting Information/Data | Extracting executive compensation data from a company’s proxy statement into a table |

Regulatory Tracking | Mapping federal consumer protection rules and analogous state laws into a compliance chart |

Checklists | Producing a GDPR compliance checklist for a SaaS company |

Based on the nature of the legal tasks, some were classified into more than one category. For example, a drafting task may involve legal research and would be classified as both a drafting task and a legal research task.

The tasks also cover a wide range of legal topics and domains that fall within an in-house lawyer's scope, including commercial transactions, consumer protection and product safety, corporate and securities work, employment and labor law, intellectual property, international and cross-border issues, litigation and dispute resolution, privacy and data protection, regulatory compliance, and sustainability. We recognize that the nature of in-house legal work spans beyond these topics and domains, and we expect to expand the areas our tasks cover in subsequent studies.

Task Structure

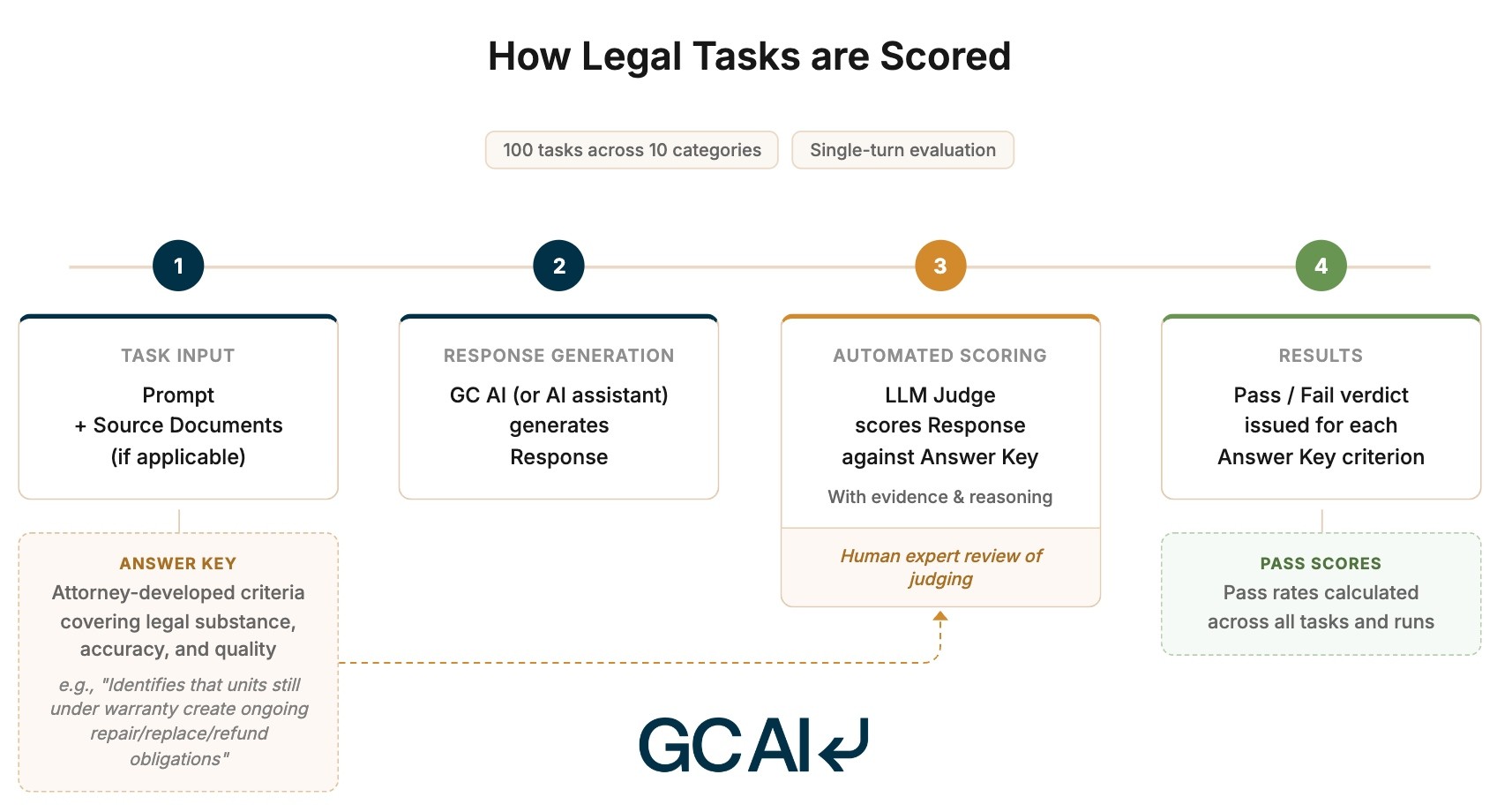

Each task consists of a prompt (what we ask the AI assistant to do) and applicable source documents. These could be contracts, policies, or other additional materials that the AI assistant would typically be given to process the request, provided as URLs, PDFs, or Word files.

We drafted the prompts to reflect how in-house lawyers would query AI tools using concise, natural-language requests. Following this approach, all evaluations in this benchmark consist of a single-turn conversation: a prompt related to the task followed by a response from GC AI (or AI assistant). We did not simplify the tasks we created to make them easier for a tool to complete in a single turn.

Evaluation Methodology

Scoring Rubric

Each task has a distinct answer key, a structured list of criteria that specifies the elements and characteristics of a high-quality, correct response (e.g., correct facts, accurate legal analysis, appropriate language). The answer keys for all our tasks were created by lawyers to be legally accurate and representative of responses that skilled and qualified in-house lawyers would produce or expect to see. In addition to task-specific criteria, several baseline response-quality criteria, which assess for appropriate depth, professional tone, and actionability, are automatically applied to every task. Combined, the answer keys average 12 criteria per task, totaling over 1,200 criteria across our 100 legal tasks.

Each criterion in an answer key describes what the response must include or provide to pass. For example, an answer key may require a response to correctly identify the effective date of Delaware’s new pay transparency law. Including the wrong effective date for this pay transparency law, or omitting a date entirely, would be considered a fail. In another example, a different answer key may require the response to include a detailed pros and cons analysis of three litigation response options, with at least two pros and two cons for each, grounded in the specific facts of the complaint.

A response that offered only generic strategy advice or a cursory analysis, without case-specific details, would fail. The answer keys use clear, concrete language to describe what the response must include to pass and provide explicit quantifiers (e.g., “at least two of”, “and”) when a criterion requires multiple elements for the response to pass.

Since responses also need to be concise and practical, not just accurate, the baseline response-quality criteria, scored on every task, evaluate a response’s substance, tone, and presentation. Does the response reflect what an in-house lawyer would consider to be practical, skillful, and polished work product? An in-house lawyer who simply asks for an initial draft policy to review is unlikely to want a detailed supplemental memo explaining the policy’s components.

Scoring Responses

We used an LLM-as-judge (“LLM Judge”) to score the responses generated by GC AI and the AI assistants for each of the prompts. The LLM Judge was given instructions on how to grade the responses, including instructions on how to interpret and apply the answer keys from an in-house perspective. In addition, for criteria containing multiple elements (e.g., X and Y), the judge was instructed to check that the response contained all required elements to issue a pass. The LLM Judge evaluated the user-facing text response and the artifacts (e.g., a drafted email or generated document) that GC AI or the AI assistants generated for the prompt.

The LLM judge graded each response against the answer key and produced a structured output with criteria-level binary pass/fail verdicts. These were supported by collected evidence and reasoning so our attorneys could review the LLM Judge’s performance.

Using the pass/fail verdicts, we calculated task pass rates by legal task category (e.g., contract analysis, drafting, etc.) as well as an overall pass score across all tasks.

To understand how the LLM Judge’s performance compared to human expert review and address potential concerns about LLM-as-judge reliability, we also manually scored a set of responses that had been previously scored by the LLM Judge. Using this data, we determined there was substantial alignment between LLM Judge scoring and human expert scoring, giving us confidence in our scoring process.

Results: GC AI vs ChatGPT, Claude, and Gemini Across 10 Legal Task Categories

GC AI achieved an overall pass rate of 86.8% across all criteria scored over the 100 tasks in our benchmark, compared to 79.8% for ChatGPT, 68.4% for Claude, and 57.5% for Gemini. GC AI also outperformed all three AI assistants in each of the 10 categories of legal tasks that we measured, with the largest advantages appearing in research-intensive tasks.

Overall Pass Rates

Percentage of answer key criteria passed across 100 legal tasks.

AI Tool | Pass Rate |

|---|---|

GC AI | 86.8% |

ChatGPT | 79.8% |

Claude | 68.4% |

Gemini | 57.5% |

Pass Rates by Legal Task Category

Criteria pass rates grouped by legal task category.

Legal Task Category | Tasks | GC AI | ChatGPT | Claude | Gemini |

|---|---|---|---|---|---|

Drafting | 19 | 87.6% | 83.4% | 74.9% | 66.4% |

Summarizing Documents | 12 | 81.6% | 77.5% | 63.7% | 57.5% |

Contract Analysis | 13 | 82.7% | 72.8% | 66.3% | 42.9% |

Legal Research | 23 | 88.3% | 75.6% | 66.2% | 61.7% |

Legal Strategy | 16 | 86.3% | 84.5% | 63.0% | 58.0% |

Risk Assessment | 26 | 89.0% | 84.2% | 71.1% | 59.2% |

Comparison/Benchmarking | 9 | 91.4% | 84.7% | 81.4% | 72.9% |

Extracting Information/Data | 24 | 82.0% | 76.9% | 57.0% | 56.3% |

Regulatory Tracking | 11 | 88.6% | 73.5% | 68.2% | 45.0% |

Checklists | 13 | 89.9% | 81.9% | 73.4% | 59.3% |

Note: Some legal tasks are associated with more than one category.

GC AI showed its largest advantage over the AI assistants on tasks that require specialized research abilities (regulatory tracking, legal research, and checklists), where the tool needs to locate, synthesize, and organize current regulatory requirements across multiple jurisdictions into clear, actionable outputs for lawyers. GC AI’s approach led to more accurate and better-sourced regulatory analysis, and its stronger performance came from presenting findings more effectively and grounding them in authoritative sources (e.g., government, court, and regulatory authorities) that lawyers trust and rely on.

In drafting and contract analysis, GC AI outperformed all three AI assistants by meaningful margins. While ChatGPT showed comparable performance on issue-spotting, GC AI demonstrated clear advantages in legal accuracy, extracting quoted text from documents (using Exact Quote), and drafting higher quality responses and language. This was driven in part by GC AI’s approach to handling documents, where stronger document matching and analysis enabled it to produce output that was more precise, accurate, and useful.

The narrowest gap appeared in legal strategy and risk assessment, where ChatGPT came closest to GC AI's performance. Claude and Gemini trailed both GC AI and ChatGPT by substantially wider margins in these categories. Despite the closer overall scores, GC AI still showed stronger performance in the quality and conciseness of its responses, including compared to ChatGPT.

Across the full benchmark, GC AI consistently produced responses that were useful, professional, and actionable. It provided material, relevant information without unnecessary detail or verbosity, demonstrating a clear pattern that held even in areas where other AI assistants matched or slightly exceeded its analytical scores.

What These Results Mean for In-House Counsel

These results demonstrate that a purpose-built legal AI platform like GC AI delivers measurable advantages over general-purpose AI assistants. While those AI assistants may demonstrate strong general reasoning capabilities, the work of in-house lawyers requires AI tools to deliver more than just reasoning. They must find and synthesize the right authoritative sources, produce responses and outputs that demonstrate an understanding of legal documents, and analyze and interpret legal issues in a manner that reflects precise legal thinking. And, importantly, they must be able to present their responses and deliverables in a way that resonates with and is trusted by lawyers.

As AI assistants and foundation models continue to improve, the baseline for legal reasoning will rise across all platforms. This benchmark points to areas where GC AI can, and will, make deeper investment, such as reasoning support, analytical frameworks, and continued expansion of its agentic capabilities, to deliver a more powerful platform for its users. GC AI is built for in-house legal teams, and these results provide both validation of that approach and a roadmap for where to invest next.

Notes

Examples of our in-house legal tasks and answer keys are available upon request. Please reach out to us here. We look forward to working with partners in future benchmarking efforts.

Frequently Asked Questions

Which AI assistant scored highest on the In-House Legal Bench?

GC AI scored 86.8% across 100 in-house legal tasks, ahead of ChatGPT (GPT-5.5) at 79.8%, Claude (Opus 4.7) at 68.4%, and Gemini (3.1 Pro) at 57.5%. GC AI led in every one of the 10 task categories, with the largest margins in research-intensive tasks like regulatory tracking and legal research.

How well did ChatGPT perform for in-house legal work?

ChatGPT (GPT-5.5) scored 79.8% on the In-House Legal Bench, the highest of the three general-purpose AI assistants tested. ChatGPT came closest to GC AI in legal strategy and risk assessment, and showed comparable performance on issue-spotting. GC AI outperformed ChatGPT in legal accuracy, extracting quoted text from documents, and drafting quality.

Can general-purpose AI like ChatGPT, Claude, or Gemini handle in-house legal work?

It depends on the task. On the In-House Legal Bench, ChatGPT scored 79.8%, Claude 68.4%, and Gemini 57.5%, compared with GC AI at 86.8%. The largest gaps appeared in research-intensive tasks like regulatory tracking, legal research, and checklists, where general-purpose models trailed in grounding answers in authoritative sources.

Which AI is best for legal research?

GC AI scored highest in research-related tasks (regulatory tracking, legal research, and checklists) where its margin over ChatGPT, Claude, and Gemini was largest. The advantage came from grounding answers in authoritative sources.

Which AI is best for contract analysis?

GC AI outperformed ChatGPT, Claude, and Gemini in contract analysis and drafting tasks. ChatGPT showed comparable performance on issue-spotting, while GC AI demonstrated clear advantages in legal accuracy, extracting quoted text from documents using Exact Quote, and drafting higher-quality language.

What categories of legal tasks did the benchmark test?

Ten categories: drafting, summarizing documents, contract analysis, legal research, legal strategy, risk assessment, comparison and benchmarking, extracting information and data, regulatory tracking, and checklists. The categories cover in-house counsel work across a range of legal topics, including commercial, employment, IP, privacy, securities, and regulatory law.

How were the responses graded?

Each task had an attorney-built answer key averaging 12 pass/fail criteria to assess the accuracy and quality of responses . An LLM-as-judge scored every response against the rubric, and the GC AI R&D team manually validated a sample to confirm the LLM Judge's alignment with human expert review.