In 2020, reviewing a vendor MSA ran three hours. In 2026, with an AI contract review platform, the first pass runs in roughly twenty minutes: clause extraction, playbook comparison, risk flagging, and a drafted redline inside Word. The GC still signs off by Wednesday. The data processing addendum still reads like it was written by a security team that does not speak your liability language. The indemnification cap is still 1.5x fees when your team usually holds at 2x. What changes is where the lawyer spends the hours, which shifts from the first read to the judgment calls.

The in-house market in 2026 fills with options for that first read: Spellbook, Ivo, LegalOn, LEGALFLY, Gavel Exec, Definely, Harvey, Luminance, Ironclad, and GC AI. Each platform claims to be purpose-built for in-house counsel. This guide is the shortcut to the right pick, covering what each platform does, how to evaluate them, and what separates a platform that earns a place in your daily stack from one that stays in the trial folder.

This guide is for the people reviewing contracts at scale in 2026: in-house counsel, legal operations leaders, contract managers, and finance or operations leaders with legal-adjacent sign-off. Whether you are a solo GC at a Series C, a three-person team at a mid-market SaaS company, a Legal Ops manager inside a public company, or a finance leader who touches MSAs every week, the same five capabilities separate a platform that changes your workflow from one that looks good in a demo and nothing more.

We built GC AI because we lived this work. Our founder Cecilia Ziniti was General Counsel three times, at Anki (consumer robotics), Bloomtech (career education), and Replit (developer tools and cloud coding), before starting the company. Across all three, she watched her team spend more time on contract review than on the legal judgment calls the business needed from her. Contract review was the first workflow she wanted AI to change, and she built GC AI on the conviction that legal AI should compound in-house lawyers and protect the judgment they are paid for.

What Is AI Contract Review?

AI contract review is the category of legal AI platforms that use generative AI models, trained on or augmented with legal context, to perform the core tasks in-house counsel do on every contract: issue-spotting, redlining, clause extraction, risk flagging, summary generation, and citation-backed answers to specific questions about a document. Where a human lawyer reads a 60-page MSA, identifies the risky indemnification clause, compares it to the team's standard position, drafts a redline, and writes a plain-English summary for the business stakeholder, AI contract review does the same work in a fraction of the time, with the lawyer as reviewer rather than drafter.

AI contract review overlaps with three adjacent categories. Knowing which is which can save an in-house team a quarter spent procuring the wrong tool.

Contract lifecycle management (CLM) platforms like Ironclad, Docusign CLM, and LinkSquares move contracts through their operational flow: intake, negotiation, execution, storage, and obligation tracking. Some CLMs layer AI on top. The primary product is still workflow, with analysis as a secondary capability.

Research-first legal AI platforms like Thomson Reuters CoCounsel and Lexis+ AI sit on top of Westlaw and Lexis respectively, optimized for case law questions. Research-first platforms fit teams whose primary work lives in case law.

General-purpose AI like ChatGPT, Claude, and Microsoft Copilot can read a contract and return plausible analysis. Without a legal system prompt, playbook enforcement, or character-level citation against the source document, the output is a draft that needs heavy rework before it reaches a business stakeholder.

An in-house-workflow platform like GC AI does contract review as the primary product, then extends across research, drafting, matter memory, and intake so a lean legal team covers the full in-house week inside a single platform. That is the distinction between an adjacent category and the platform an in-house team uses every day.

How AI Contract Review Works

Modern AI contract review platforms share a common architecture, even when the user-facing product looks different. Understanding the workflow helps you evaluate whether a specific platform will hold up on your real work.

Ingestion. The platform reads the document, typically a PDF or Word file, and converts it into text the language model can process. The quality of ingestion matters more than it sounds. Scanned PDFs, poorly formatted DOCX files, and contracts with embedded tables can all degrade analysis downstream.

Contextualization. The platform applies your organization's context to the document: your standard positions, your playbook, prior related agreements, the counterparty's paper from previous deals. This is where in-house-purpose-built platforms diverge sharply from generic AI. A platform that remembers your preferred indemnification cap, your standard limitation of liability language, and your risk tolerance on data processing produces redlines your whole team can stand behind. A platform without that context produces generic legal analysis that needs rework before it reaches a business stakeholder.

Analysis. The language model, augmented with legal-specific prompting and sometimes retrieval-augmented generation from a legal corpus, performs the actual review: identifying clauses, flagging deviations, suggesting language, extracting key terms, and producing summaries.

Citation and verification. The best platforms link every output back to the source document at the character level. When the AI claims the indemnification cap is 2x fees, you can click the citation and see the exact passage in the source PDF. Without this verification layer, AI contract review is a draft that a lawyer must fact-check line by line, which defeats the purpose.

Delivery. The output shows up where you work: inside Microsoft Word as a redline, in a chat interface as a summary, in a deal room as a structured extraction. The surface matters. A platform that requires you to leave Word, upload the document to a web app, get the analysis, and copy the redlines back into Word is a platform your team will use half as often as one that lives inside Word itself.

Why In-House Contract Review Breaks Without AI

Three structural problems separate in-house contract review from law firm practice: contract volume outpaces team headcount, consistency breaks down without encoded standards, and there is no senior partner to check work before it reaches the business. Legal AI is uniquely suited to solve all three.

The volume is misaligned with the headcount. In-house legal departments review hundreds or thousands of contracts a year with small teams. Without leverage, every contract steals time from strategic work: board counsel, regulatory matters, employment questions, deal structuring. The math forces a choice between reviewing every contract carefully and doing the strategic work the company needs from legal. AI contract review removes the forced choice.

Consistency is impossible without encoded standards. Five lawyers reviewing the same NDA through the same playbook should produce the same redlines. In practice, they do not. Junior attorneys flag different issues than senior attorneys. The same senior attorney flags different issues on a Friday afternoon than they would on a Tuesday morning. AI contract review, when backed by a properly configured playbook, produces the same output every time. Your institutional knowledge lives in the system, where the whole team can apply it.

There is no senior partner checking your work. Law firm associates send their work to a partner who red-pens it before it reaches the client. In-house counsel do not have that review layer. The GC is the final reviewer, and whatever leaves legal goes directly to the business, the board, or opposing counsel. AI contract review adds a second pair of eyes that does not get tired, does not miss clauses, and does not forget last quarter's negotiated position on data retention.

The 5 Capabilities That Decide In-House AI Contract Review

In-house teams that keep a platform after the trial share a consistent list of reasons. Here are the five.

Playbook Automation

Character-Level Citation

Word-Native Workflow

Matter Memory

Multi-Document Agentic Review

Each section below covers what to look for, what questions to ask in a demo, and how the leading platforms approach it.

Playbook Automation

Strong AI contract review platforms let you encode your organization's standard positions once, then apply them to every incoming contract automatically. Upload a standard NDA. Define your position on indemnification caps, liability limitations, confidentiality carve-outs, and data retention. The next NDA that hits your inbox is reviewed against those positions, deviations are flagged, and suggested redlines are drafted before you open the file.

GC AI's Playbooks are one implementation of this pattern, purpose-built for in-house workflow. A Playbook for NDA review might check: is the indemnification cap at or above your standard threshold, is there a liability carve-out for IP infringement, is the data retention period within your tolerance, does the governing law match your preferred jurisdiction, are the confidentiality carve-outs standard. The Playbook applies those checks to every incoming NDA automatically, flags deviations, and drafts redlines before you open the file. Pre-built playbooks ship for NDAs, DPAs, SaaS MSAs, and employment agreements. Custom playbooks train on your team's prior agreements, encoding the judgment that usually lives in the senior attorney's head.
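To make the pattern concrete, here is a minimal sketch of a playbook as encoded positions checked against terms already extracted from an incoming NDA. It illustrates the idea only and does not describe how GC AI or any other vendor implements it. The `Playbook` fields, the `check_nda` helper, the term names, and the thresholds are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Playbook:
    """Hypothetical encoding of a team's standard NDA positions."""
    min_indemnity_cap_multiple: float  # cap expressed as a multiple of fees
    max_retention_days: int
    governing_law: str
    require_ip_carve_out: bool


def check_nda(terms: dict, playbook: Playbook) -> List[str]:
    """Compare extracted terms to the playbook; return one flag per deviation."""
    flags = []
    if terms["indemnity_cap_multiple"] < playbook.min_indemnity_cap_multiple:
        flags.append(
            f"Indemnification cap {terms['indemnity_cap_multiple']}x is below "
            f"the standard {playbook.min_indemnity_cap_multiple}x"
        )
    if terms["retention_days"] > playbook.max_retention_days:
        flags.append(
            f"Data retention of {terms['retention_days']} days exceeds "
            f"the {playbook.max_retention_days}-day tolerance"
        )
    if terms["governing_law"] != playbook.governing_law:
        flags.append(
            f"Governing law is {terms['governing_law']}, "
            f"not {playbook.governing_law}"
        )
    if playbook.require_ip_carve_out and not terms["ip_carve_out"]:
        flags.append("No liability carve-out for IP infringement")
    return flags


# Assumed example values: the team holds at 2x fees and the counterparty offers 1.5x.
playbook = Playbook(
    min_indemnity_cap_multiple=2.0,
    max_retention_days=90,
    governing_law="Delaware",
    require_ip_carve_out=True,
)
incoming = {
    "indemnity_cap_multiple": 1.5,
    "retention_days": 60,
    "governing_law": "Delaware",
    "ip_carve_out": False,
}
flags = check_nda(incoming, playbook)
for flag in flags:
    print(flag)
```

In a real platform the language model does the hard part, extracting the terms and drafting the redline. The sketch only shows why encoded positions produce the same flags on every run.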

Other platforms, including Spellbook and LegalOn (which launched its My Playbooks feature in January 2025), have their own implementations. The question to ask in a demo is whether the playbook encodes your team's positions (right answer) or applies a generic legal standard (wrong answer).

Character-Level Citation

When an AI summarizes a contract and tells you the cure period is thirty days, you need to know whether that is accurate. Platforms that paraphrase without citation force you to re-read the contract to verify every claim, which eliminates the time savings. Platforms that cite at the paragraph level help but still require you to scan the paragraph. The strongest category of AI contract review platforms cite at the character level: click the citation, see the exact passage highlighted in the source document.

GC AI's Exact Quote is one implementation of this pattern. Every AI output links to the verbatim language in the source contract. When your name is on the memo that goes to the CEO, character-level citation is the difference between forwarding AI output with confidence and spending an hour verifying it. Anything less than character-level is a confidence tax on every output.
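The underlying check is simple to sketch: a citation is verifiable only if the quoted text appears verbatim in the source and can be located by character offsets. The snippet below is an illustrative assumption, not a description of Exact Quote's internals. The `locate_quote` helper and the sample clause are hypothetical.

```python
from typing import Optional, Tuple


def locate_quote(source: str, quote: str) -> Optional[Tuple[int, int]]:
    """Return (start, end) character offsets of an exact quote, or None if absent."""
    start = source.find(quote)
    if start == -1:
        return None
    return start, start + len(quote)


# Hypothetical clause text for illustration.
contract = (
    "Either party may terminate this Agreement for material breach that "
    "remains uncured thirty (30) days after written notice."
)
verbatim = "uncured thirty (30) days after written notice"
paraphrase = "a 30-day cure period"

span = locate_quote(contract, verbatim)
print(span, contract[span[0]:span[1]])   # offsets plus the exact passage to highlight
print(locate_quote(contract, paraphrase))  # a paraphrase cannot be located
```

A production system would also need to handle whitespace, line breaks, and OCR noise before matching. The point of the sketch is that a verbatim quote can be checked mechanically, while a paraphrase cannot.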

Word-Native Workflow

Lawyers draft and redline in Microsoft Word. A contract review platform that requires you to leave Word, upload the document to a web interface, get the review, and copy the redlines back into Word is a platform your team will use inconsistently. Platforms that live inside the surface where legal work already happens earn more consistent daily use.

GC AI for Word brings the full platform into Word as an Add-in. Chat2 runs web research inside the document. Easy Prompt drives one-click legal prompting. Playbooks execute agentic contract review on the document you are drafting. You never leave Word. Spellbook is strong on the firm side of this category. The question to ask is whether the Word integration covers your full workflow, or only drafting.

Matter Memory

Contract review typically spans multiple documents. A vendor contract ties to a DPA, a side letter, a security addendum, and a prior year's MSA. A platform that forgets the related documents between chats forces you to re-brief the AI on every session. A platform with persistent matter memory remembers the parties, the counterparty's paper, the negotiated positions, and your prior guidance across every conversation.

GC AI's Projects carry this memory. Upload the deal documents once, and two weeks later the AI still knows which MSA governs, which indemnification cap was negotiated, and what was flagged in the last round. For complex matters, matter memory is the capability that turns AI from a one-shot task into a deal team member.

Multi-Document Agentic Review

The next generation of AI contract review reads across documents, extending the analysis beyond a single file. When counsel asks whether a new vendor agreement conflicts with existing DPAs, a strong platform analyzes the full document set agentically, identifies the conflict, and surfaces the specific clauses at issue. GC AI combines Projects (matter memory across documents), Playbooks (consistent review standards), and agentic web research in a single workflow built for in-house use cases. Spellbook's Associate and Harvey's Workflow Agents compete in adjacent firm-side territory.

The five capabilities above are category criteria: any platform an in-house team evaluates in 2026 should deliver on playbook discipline, character-level citation, Word-native workflow, matter memory, and multi-document review. Teams that benchmark every short-listed platform against the same five criteria, on their own contracts, make better decisions than teams that rely on demo impressions.

How GC AI Covers All Five Capabilities

Playbooks encode your positions and apply them to every incoming contract. Exact Quote verifies every citation against the source document at the character level. GC AI for Word brings the full platform into the surface where drafting already happens. Projects carry matter memory across every chat and every matter. Playbooks paired with agentic Research handle multi-document review across NDAs, DPAs, MSAs, and side letters in a single workflow.

The AI Contract Review Platform Landscape in 2026

Inside the AI contract review market, five platform types serve different in-house use cases: purpose-built legal AI, Word-native contract drafting, enterprise law firm platforms, dedicated contract review, and CLM with AI layered on. In-house teams commonly run platforms from more than one type.

| Platform | Built For | Pricing | Trial | Seat Minimum |
|---|---|---|---|---|
| GC AI | In-house legal teams | $500/seat/month | 14 days, no credit card | None |
| Spellbook | Firm-side transactional attorneys | Not published | Limited via sales | Sales-led |
| Gavel Exec | In-house counsel, Word-native | Not published | 25-query free trial, no credit card | Not disclosed |
| Harvey | Large law firms, expanding in-house | Not published, enterprise | Via sales | Enterprise |
| LegalOn | Dedicated contract review | Enterprise | Via sales | Enterprise |
| Luminance | Dedicated contract review | Enterprise | Via sales | Enterprise |
| Kira Systems | Dedicated contract review | Enterprise | Via sales | Enterprise |
| Ironclad AI | CLM with AI | Enterprise, usage-based | Via sales | Enterprise |
| Legalfly | In-house, GDPR-focused | Not published | Via sales | Not disclosed |
| ChatGPT Business | General-purpose AI | $20–$25/user/month | Free tier | None |

Purpose-built legal AI for in-house counsel. GC AI defines this category. It is designed end-to-end for in-house workflow: contract review, playbook automation, research, matter memory, Word drafting, and intake. The system prompt, tone, and output style are calibrated for a business-stakeholder audience.

The category covers the broadest slice of an in-house lawyer's week. 1,500+ in-house teams, 80+ public companies, and 25 unicorns use GC AI daily. The platform was built by Cecilia Ziniti, a three-time General Counsel who ran in-house legal at Anki, Bloomtech, and Replit before starting the company.

Two other platforms position for in-house use. Ivo is an AI Contract Intelligence platform for enterprise legal teams, with three products (Intelligence, Review, Assistant) and Word-native redlining. Legalfly positions as the "legal operating system for corporates," with document anonymization before analysis, ISO 27001 and SOC 2 Type II certifications, regulatory monitoring across 60+ jurisdictions, and a Word Add-in.

US in-house buyers weighing these two against GC AI can compare on what GC AI documents publicly: a three-time GC founder, published $500/seat/month pricing with a 14-day self-serve trial, 3,000+ lawyers taught through its CLE-eligible classes, and US customer scale across SaaS, fintech, cybersecurity, consumer, and retail sectors.

Word-native contract drafting. GC AI for Word brings the full GC AI platform into Microsoft Word: Chat2 research, Playbooks for agentic redlining, Projects for matter memory, Easy Prompt for one-click legal prompting, and Exact Quote for character-level citation, without leaving the document. Spellbook is the firm-side alternative, with Review, Draft, Ask, Benchmarks, Associate, and a Clause Library. Gavel Exec positions for in-house counsel with Word-native review, drafting, and redlining, plus Playbooks, Benchmarking, and Projects, and offers a 25-query free trial without a credit card.

For in-house teams whose week spans contract review, employment questions, privacy reviews, regulator letters, and research, GC AI for Word is the pick. It pairs Word integration with the full legal AI platform, rather than a drafting plug-in. Spellbook's strength is firm-side transactional work. See the full breakdown in GC AI vs Spellbook.

Enterprise law firm platforms. Harvey is the leader in this category, built for AmLaw firms doing litigation, M&A, and cross-jurisdictional advisory work. The product suite reflects that firm-side origin: Vault for large-scale document diligence, Assistant for drafting, Knowledge for cross-domain research, and Workflow Agents for custom automations. Harvey extended into in-house in 2026, but the product DNA stayed law-firm: the workflows the tool optimizes for, the partner-associate dynamics, and the billable-hour math all point back to firm practice. Pricing is enterprise and not published.

When a Fortune 500 legal department with a dedicated legal ops function and procurement cycle wants a firm-adjacent platform, Harvey fits. For in-house teams under 20 lawyers, a platform built from day one for in-house workflow delivers faster time to value. See the full breakdown in GC AI vs Harvey.

Dedicated AI contract review. LegalOn is a long-standing player here, with 8,000+ customers, a Word Add-in, and a seven-product suite spanning Review, Assistant, Matter Management, Knowledge Core, Agents, Translate, and Word integration. LegalOn launched its My Playbooks feature in January 2025.

GC AI's Playbooks occupy the same space: agentic, repeatable contract review against your team's standards, pre-built for NDAs, DPAs, and SaaS MSAs, customizable on your prior agreements. The difference is coverage. GC AI pairs Playbooks with research, employment, privacy, and cross-domain work that hits an in-house desk all week. A platform dedicated to contract review handles one slice well. An in-house-workflow platform handles the whole week in one product.

Other platforms in the dedicated category include Luminance, Kira Systems, and Sirion. These are typically enterprise-priced with long implementation cycles, well-suited to large legal teams or M&A-heavy work where contract volume justifies setup cost.

CLM with AI layered on. Ironclad AI, Docusign CLM, and LinkSquares lead this category. LinkSquares offers LinkAI for conversational contract searching, 120+ auto-extracted dates and clauses, and a unified drafting-negotiation-analytics workflow.

CLMs move contracts through their lifecycle: intake, routing, negotiation, execution, storage, and obligation tracking. AI is a capability layer on top of a workflow product. In-house teams that run a CLM typically also run a legal AI platform, because the two solve different problems. CLM moves the paper. Legal AI does the lawyer's analysis, redlining, research, and cross-domain work.

Legal research platforms. Thomson Reuters CoCounsel and Lexis+ AI are research-first, grounded in Westlaw and Lexis respectively. They are the right pick for teams that live in case law. For most in-house teams, research is a supporting capability alongside a primary contract review platform.

General-purpose AI. Claude for Work, ChatGPT Business, and Microsoft 365 Copilot are horizontal platforms with legal use cases. Useful for non-confidential first drafts and brainstorming. None replaces a legal AI platform on confidentiality, citation discipline, or the legal system prompt that does most of the quality work on a purpose-built platform.

Other platforms that surface in AI contract review searches include LegalSifter, Legartis, LexCheck, Icertis, Definely, and Robin AI. Each serves slices of the market, from high-volume enterprise clause extraction to human-in-the-loop managed review.

Acquired and absorbed. LawGeex pioneered AI contract review and still surfaces in search results, but it no longer operates as a standalone product. Robin AI absorbed its enterprise contracts in February 2023, and LegalSifter picked up a strategic group of clients that September. Evisort followed a similar path, getting acquired by Workday in 2024 and folded into that platform. In-house teams evaluating AI contract review in 2026 should focus on platforms that are actively developed and marketed.

How to Evaluate AI Contract Review Software

To evaluate AI contract review software, run eight checks on the short list. Each section below covers what to look for and what questions to ask the vendor.

Bring Your Real Documents

Test the platform on a live NDA, a vendor MSA from last week, an employment question you had to research, and a privacy addendum that raised a flag. Do not let the vendor pick the test contracts. Vendors know which documents their platform handles well.

Test the Playbook Discipline

Upload your team's standard NDA. Define three to five positions the team holds: indemnification cap, liability limitation, data retention, confidentiality carve-outs, governing law. Run an incoming NDA against the playbook. Does the AI flag the deviations your senior attorney would flag? Does it suggest redlines you would actually accept?
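One way to keep this test honest is to score the platform's flags against a list your senior attorney writes first. The sketch below assumes you record both sets of flags as short labels by hand. The `score_flags` helper and the sample labels are hypothetical and are not part of any vendor's product.

```python
def score_flags(ai_flags: set, attorney_flags: set) -> dict:
    """Score AI-raised flags against the senior attorney's baseline list."""
    caught = ai_flags & attorney_flags
    return {
        # Share of the attorney's issues that the AI also raised.
        "recall": len(caught) / len(attorney_flags) if attorney_flags else 1.0,
        # Share of the AI's flags that the attorney agrees with.
        "precision": len(caught) / len(ai_flags) if ai_flags else 1.0,
        "missed": sorted(attorney_flags - ai_flags),
        "extra": sorted(ai_flags - attorney_flags),
    }


# Hypothetical results from running one incoming NDA through a trial.
attorney = {"indemnification cap", "governing law", "data retention"}
ai = {"indemnification cap", "data retention", "confidentiality carve-outs"}

result = score_flags(ai, attorney)
print(result)
```

Run the same scoring on every short-listed platform with the same contracts. The `missed` list matters most, because a missed deviation is the failure that reaches the business.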

Verify the Citations

Ask the AI a specific factual question about a long contract. Check whether the citation is accurate to the exact text, or whether the AI paraphrased and approximated. Character-level citation is what earns trust on work that goes to the CEO or the board.

Test the Word Experience

If the platform has a Word integration, use it for your actual drafting. Redline a clause. Ask a research question without leaving the document. Generate a summary. If you catch yourself leaving Word to get a better result, the Word integration is not production-ready.

Read the DPA

The data processing agreement is where the vendor discloses how your data is used. Confirm the vendor's zero data retention position with its underlying model providers, its SOC 2 Type II certification, GDPR compliance, and AES-256 encryption at rest. Procurement will ask. The answers should be documented in writing and stored where procurement can pull them.

Audit AI Claims Before You Repeat Them

Platforms and vendors sometimes overstate AI capability. For public companies, repeating overstated claims in disclosures, investor materials, or marketing creates AI washing risk, a disclosure issue the SEC now reviews. Test what the platform produces before describing it upstream. On CZ & Friends with Cecilia Ziniti, former SEC enforcement partner Rebecca Fike walked through what regulators look for when they reconstruct a deal.

Talk to an Actual Customer

Talk to an in-house customer, not a firm-side one. The workflows are different enough that a firm-side testimonial does not tell you how the platform holds up on in-house work. Ask the vendor for a reference from a team that looks like yours: similar size, similar industry, similar contract volume.

Measure Time to Value

Can you demonstrate quality on your real work in week one of a trial, or does the platform require a quarter of implementation before it produces output you can act on? In-house teams cannot afford a quarter of setup. If the vendor cannot show value in week one, the platform is not the right fit for a lean legal team.

The Role of AI Education and Team Adoption

Prompting, auditing AI output, building playbooks, and understanding hallucination risk are the new skill layer every in-house lawyer needs in 2026. Buying the platform is the easy part. Fluency in how to use it is what compounds the investment.

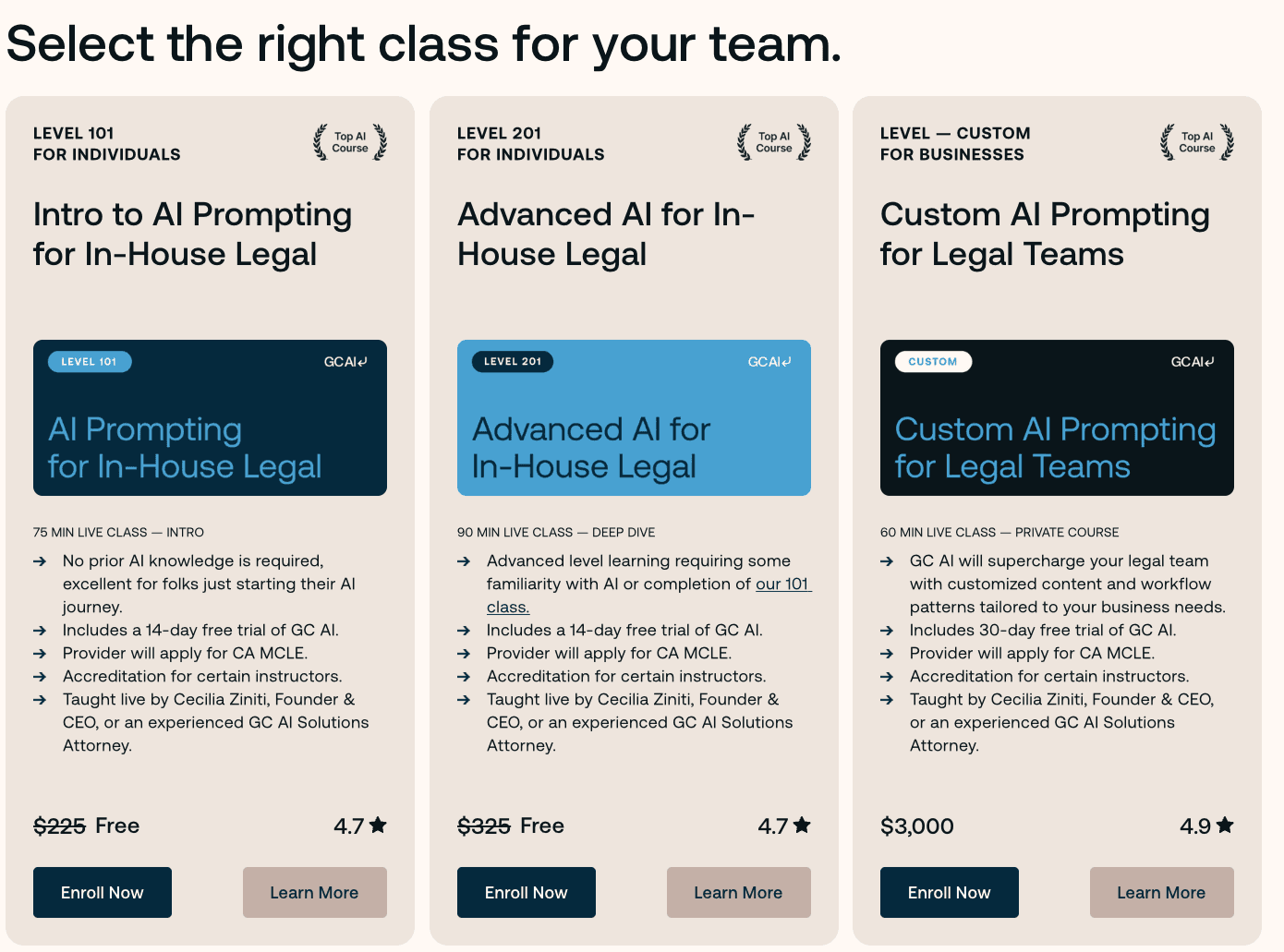

GC AI has taught 3,000+ lawyers through its free, California CLE-eligible classes, led by former general counsels. The 101 course teaches AI prompting fundamentals for in-house counsel. The 105 course walks through AI-assisted redlining and drafting inside Microsoft Word. The 106 and 107 courses teach teams how to build and deploy Playbooks for automated contract review.

GC AI also ships ready-made prompts that translate legal work for business stakeholders. The Action Items from Client Alert prompt takes a law firm client alert and returns a tailored action list for the CFO, Head of People, or VP of Product. It is the kind of translation that used to cost an hour of rewriting every time a client alert hit the inbox.

Platforms without real training programs get less usage, regardless of capability. Adoption follows education. Buying a legal AI platform with a real curriculum attached is the closest thing to buying both the product and the change-management team in one contract.

Join the 3,000+ lawyers learning AI legal skills with GC AI.

What In-House Teams Measure After Adopting AI Contract Review

According to GC AI's December 2025 customer survey of more than 100 active users, lawyers using AI contract review report saving an average of 14 hours per week and cutting outside counsel spend by 14%.

The ACC Law Department Management Benchmarking Report puts median in-house outside counsel spend at $1.8 million per department, which gives that reduction real scale. In GC AI's survey, 97.5% of respondents reported seeing value before the end of their first month.

The customer base for in-house AI contract review platforms skews heavily toward SaaS and developer-tools, fintech and payments, cybersecurity, consumer and DTC, and apparel and retail.

Start With One Contract That Matters

The contracts that decide whether a platform earns a place in your stack are the ones with non-standard indemnification, unusual non-compete language, or counterparty paper that tests your team's standards. A platform that handles those earns daily use. A platform that does not stays in the trial folder.

Run GC AI on one of those contracts this week.

A 14-day free trial starts with no credit card, no procurement overhead, and no seat minimum. If you would rather see the platform on your team's workflow with a Solutions Attorney, book a demo.

Frequently Asked Questions

How Accurate Is AI Contract Review?

Accuracy depends heavily on the platform and the task. Platforms with character-level citation against uploaded documents, a legal-specific system prompt, and playbook-driven review produce more reliable output than general-purpose AI. According to GC AI's December 2025 customer survey, outputs from a purpose-built legal AI platform reflect a 21% greater perceived accuracy than generalist AI on the same legal tasks. The industry standard is still that a human lawyer reviews AI output before it goes to a counterparty or a business stakeholder.

Is AI Contract Review Safe for Confidential Documents?

With the right platform, yes. Enterprise-grade AI contract review platforms hold SOC 2 Type II and SOC 3 certifications, comply with GDPR, maintain zero data retention agreements with their underlying model providers, and encrypt data at rest with AES-256. GC AI runs on OpenAI, Anthropic, Cohere, Reducto, and Google, with zero data retention agreements with OpenAI and Anthropic. Consumer AI products like the free tier of ChatGPT use conversations for model training by default, which creates confidentiality risk for client documents. Users can opt out, but the default exposes attorney work product to training data the organization does not control.

What Is the Best AI Contract Review Software for In-House Counsel?

GC AI is a legal AI platform purpose-built for in-house counsel, used by 1,500+ in-house legal teams across 53 countries. Key capabilities for contract review include Playbooks for agentic repeatable review against your team's standards, Exact Quote for character-level citation from source documents, GC AI for Word for Word-native workflow, and Projects for persistent matter memory. Pricing is published at $500 per seat per month with a 14-day free trial and no seat minimum.

How Do I Measure AI Contract Review ROI for My CFO?

The two numbers CFOs care about are time saved per lawyer and outside counsel spend reduction. Per GC AI's December 2025 customer survey of more than 100 active users, lawyers report saving an average of 14 hours per week and cutting outside counsel spend by 14%. Applied to the ACC's reported $1.8 million median in-house outside counsel spend, a 14% reduction translates to roughly $252,000 in annual savings. Build the CFO case on three line items: hours redirected to strategic work, dollars not spent on outside counsel, and work kept in-house.
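The arithmetic behind those line items can be laid out in a few lines so a CFO can swap in the company's own figures. The spend, percentage, and weekly hours come from the survey and ACC numbers cited above. The team size and working weeks are assumptions for illustration, and the function names are hypothetical.

```python
MEDIAN_OUTSIDE_COUNSEL_SPEND = 1_800_000  # ACC median cited above, in dollars
SPEND_REDUCTION_PERCENT = 14              # survey-reported reduction
HOURS_SAVED_PER_WEEK = 14                 # survey-reported average per lawyer


def outside_counsel_savings(spend: int, reduction_percent: int) -> float:
    """Annual dollars not spent on outside counsel."""
    return spend * reduction_percent / 100


def hours_redirected(hours_per_week: int, lawyers: int, working_weeks: int) -> int:
    """Annual lawyer hours moved from first-pass review to strategic work."""
    return hours_per_week * lawyers * working_weeks


savings = outside_counsel_savings(MEDIAN_OUTSIDE_COUNSEL_SPEND, SPEND_REDUCTION_PERCENT)

# Assumptions for illustration only: a three-lawyer team working 46 weeks a year.
hours = hours_redirected(HOURS_SAVED_PER_WEEK, lawyers=3, working_weeks=46)

print(f"Outside counsel savings: ${savings:,.0f}")
print(f"Hours redirected per year: {hours:,}")
```

Replace the median spend with your department's actual outside counsel budget before presenting the number, since the survey figures are self-reported averages and will not match every team.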

Can AI Contract Review Maintain Attorney-Client Privilege?

Privilege protection depends on the platform's data handling. Enterprise-grade AI contract review platforms maintain zero data retention with their model providers, SOC 2 Type II certification, GDPR compliance, and AES-256 encryption. GC AI maintains zero data retention with OpenAI and Anthropic. Consumer AI products that use conversations for model training by default break the privilege assumption. Read the DPA. Verify zero data retention. Confirm the trust center.

What Is the Hallucination Risk for In-House Contract Review in 2026?

Hallucination risk is highest on platforms without character-level citation against the source document. Platforms with character-level citation make hallucination verifiable rather than invisible. Click the citation, see the exact passage highlighted in the source PDF. The industry standard remains that a human lawyer reviews AI output before it leaves legal.

For public companies, the adjacent risk is AI washing: overstating what AI does in disclosures, investor materials, or marketing. The SEC now treats AI washing as a disclosure problem with enforcement consequences. On CZ & Friends with Cecilia Ziniti, former SEC enforcement partner Rebecca Fike walked through what regulators look for.

Does AI Contract Review Replace Lawyers?

No. AI contract review augments in-house lawyers by automating the rote parts of contract analysis, freeing lawyers to focus on business counseling, deal structuring, and strategic work. Recent ACC Law Department Benchmarking data shows that in-house teams adopting AI contract review typically keep more work in-house, expanding what a given team can cover instead of cutting headcount.

How Much Does AI Contract Review Cost?

Pricing varies widely, and the number on the invoice is only part of the cost. Platforms with published pricing and a self-serve trial cut procurement to days. Platforms that gate pricing behind a sales conversation can stretch procurement to months, before the team has even confirmed the platform works on their contracts.

GC AI publishes pricing at $500 per seat per month with a 14-day free trial, no credit card, and no seat minimum. A solo GC can be in the product the same afternoon. Team and enterprise plans add SSO, shared Playbooks, and managed procurement support for larger teams.

Firm-side platforms like Spellbook and enterprise platforms like Harvey require a sales conversation. Dedicated contract review platforms like LegalOn and Luminance are enterprise-priced. General-purpose AI like ChatGPT Business runs $20-$25 per user per month. It is a productivity platform with legal use cases, sitting in a different lane from legal AI.